5G Wireless and Beyond: From Evolution to Revolution

By Tiffany Fox and Liezel Labios

Center for Wireless Communications Director Sujit Dey coordinated the 5G & Beyond Forum, which focused on three 5G applications: connected health, new immersive media experiences (including artificial intelligence and virtual reality) and the Internet of Things, a “smart” connected world at the confluence of sensing technologies and machine learning.

From a technical standpoint, fifth generation mobile wireless – or 5G, as it’s commonly known – is more about “evolution” than “revolution.” In many ways 5G simply builds upon the mobile infrastructure established by the current wireless standard, 4G LTE. From the standpoint of the imagination, however, 5G is poised to reshape the technological world as we know it.

The new standard, which is expected to be deployed by 2020, will support data transfer rates of 10 to 20 gigabits per second per mobile base station, 10 to 100 times faster than typical 4G connections. These capabilities will make it possible to harness sensor technologies, virtual reality, artificial intelligence and machine learning for unprecedented applications, which were discussed at length in a series of forums and technical presentations at 5G & Beyond, held at the Qualcomm Institute at the University of California San Diego.

Electrical and Computer Engineering Professor Sujit Dey directs the UC San Diego Center for Wireless Communications (CWC) and coordinated the conference. He noted that the CWC is focused on three applications: connected health, new immersive media experiences (including artificial intelligence and virtual reality) and the Internet of Things, a “smart” connected world at the confluence of sensing technologies and machine learning.

“In all of these things, the common question is how will the new 5G network support these applications,” added Dey. “Our 5G & Beyond Forum was designed to address not only these new advances but also what they will require, what 5G can provide, and what it will take to make it all happen soon, given that 5G is racing toward commercial deployment in the next two years and we’re already talking about 6G.”

Some of the biggest players in wireless applications and technology attended 5G & Beyond, including more than 100 representatives from Qualcomm, Cisco, Microsoft, Samsung, Keysight Technologies, Kaiser Permanente, ViaSat and several other major industry players. Sessions included talks on the progress and challenges of 5G as well as the impact of networks and devices on future healthcare-delivery models, connected and autonomous vehicles and other 5G applications. The forum was followed by a planning meeting to, as Dey put it, “synthesize all those opinions, provide further opinions about what we should do, and with valuable input from our industry members shape our future research projects.”

Several keynote presentations provided a high-level overview of the future of 5G, including a talk by Durga Malladi, Senior Vice President of Engineering at Qualcomm. In his talk, “Making 5G New Radio (NR) a Reality,” Malladi described 5G as a “unifying connectivity fabric” for enhanced mobile broadband, for mission-critical services (those that demand extremely tight latency bounds and/or extremely high reliability) and for the massively connected world of the Internet of Things (IoT).

Noting that 5G-related goods and services are expected to amount to a $12 trillion industry in 2035, Malladi encouraged audience members to remember that “our job is to build the right technical elements so that others can exploit the extremely high data rates – in the order of 5 gigabits per second – and self-imposed low latencies of less than one millisecond that are possible with 5G.” Malladi cited a number of emerging and as-yet-unexplored use cases that 5G will make possible, including immersive entertainment and experiences like virtual reality, sustainable cities and infrastructure, improved public safety, more autonomous manufacturing, and safer vehicular communications.

“Today almost all vehicles are connected in one way or another,” he added by way of example. “Either the vehicle itself is connected to the network (via onboard computing systems) or the person driving it is connected to the network (via mobile phone). Autonomous cars require vehicle-to-vehicle communication, but what if, through vehicle-to-infrastructure communication, we made speed limits adaptive so they changed based on traffic patterns?”

Reliable access to remote healthcare is another 5G application highly anticipated by both engineers and clinical providers. Alex Gao, head of the Digital Health Lab at Samsung Research America, said his team is working toward transforming consumer-grade appliances “into a guardian-angel experience for a specific disease state” by harnessing the immersive experience and massive connectivity enabled by 5G for digitized care calendars, compliance checking and remote biosensing.

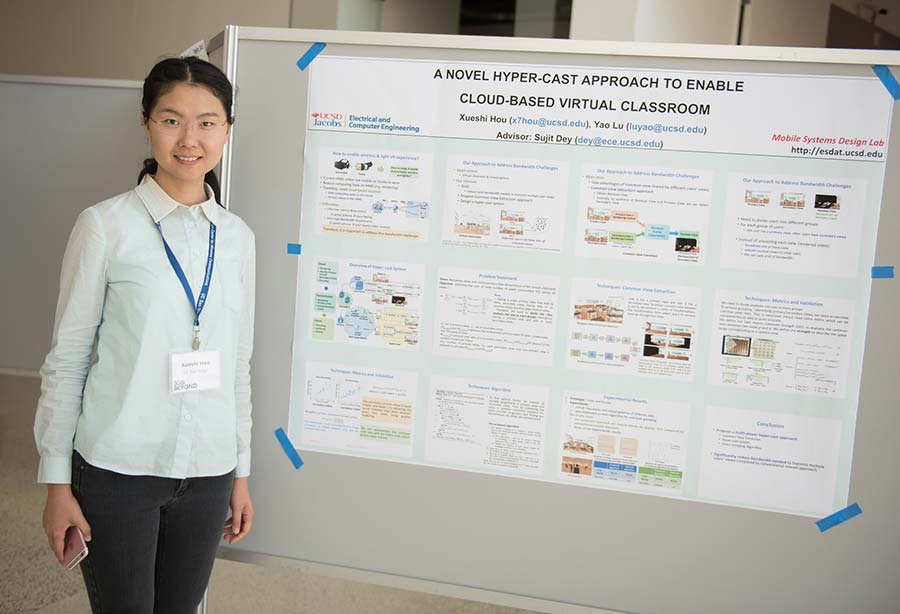

Xueshi Hou of UC San Diego presents her poster on “A Novel Hypercast Approach to Enable Cloud-Based Virtual Classroom” at 5G & Beyond. 5G’s capabilities will make it possible to harness sensor technologies, virtual reality, artificial intelligence and machine learning for unprecedented applications.

Machine learning and artificial intelligence will also factor heavily into capturing these novel patient insights, as Research Engineer Ayan Acharya of Cognitive Scale noted.

“It’s not enough to do data analytics in a silo and hand the report to the product or business managers,” Acharya noted. “You have to provide some sensible outcome or action that will go to the patients themselves. We have to invest heavily on machine-learning models that are interpretable and can identify patient preferences and their needs by analyzing public, private and third-party data to provide insights on what actions to take next.”

Doing this will require what one speaker called “the edge eating the cloud”: a shift toward machine learning at the ‘edge’ of the network (on a connected device, for example), which filters out key insights to give the patient actionable data. This model also addresses some of the privacy concerns inherent in sending personal health data to the cloud, because the edge processes data locally and sends only non-private information to the cloud, where it is analyzed globally.

It also addresses one of the greatest challenges of achieving an ultra-connected world: spectrum, or the ability to provide massive connectivity to all connections, all the time, without forcing mission-critical services to compete for bandwidth. “Trillions of sensors are sending chatty data to the cloud,” explained Dey, “and this is not tenable because it represents trillions and trillions of unnecessary connections.”

In his talk, “Hybrid Edge Analytics for Real-Time, Personalized Virtual Care,” Dey discussed research UC San Diego is conducting with partners such as Kaiser Permanente to create a heterogeneous network composed of diverse access technologies that coexist and work together. “But also,” Dey added, “a computational hierarchical architecture that includes storage and analytics helping at the edge – both the core mobile network edge, the base stations, and the edge right in our homes and physical spaces.”

“This way, we can address this big problem: we don’t need to send all this data all the way to the cloud. This helps in terms of number of connections, bandwidth and how we leverage this platform to do analytics,” said Dey.
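To make the edge-versus-cloud trade-off concrete, here is a minimal Python sketch of an edge node that turns a window of raw sensor readings into one de-identified summary before anything is uploaded. The `Reading` record, the heart-rate field, the 120 bpm alert threshold and the summary fields are all hypothetical choices for illustration; they are not drawn from the UC San Diego or Kaiser Permanente systems.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    patient_id: str        # private identifier: stays on the edge device
    heart_rate_bpm: float  # hypothetical sensor value


def summarize_at_edge(readings, alert_bpm=120.0):
    """Reduce a window of raw readings to one de-identified summary.

    Hypothetical edge step: the patient ID and the raw samples never leave
    the device; only aggregate values and a locally computed alert are uploaded.
    """
    if not readings:
        raise ValueError("need at least one reading")
    rates = [r.heart_rate_bpm for r in readings]
    peak = max(rates)
    return {
        "count": len(rates),
        "mean_bpm": mean(rates),
        "max_bpm": peak,
        "alert": peak > alert_bpm,  # actionable insight computed at the edge
    }


# One minute of 1 Hz samples becomes a single upload instead of 60.
window = [Reading("patient-001", 70.0 + (i % 5)) for i in range(60)]
summary = summarize_at_edge(window)
print(summary)
```

Sending one summary per window rather than every sample is what reduces the number of connections and the bandwidth described above; the trade-off is that the cloud sees only what the edge chooses to forward.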

Meeting the Massive Challenges of Massive MIMO

Marcos Tavares of Bell Labs (left) chats with UC San Diego Professor of Electrical Engineering Bhaskar Rao at the demo session during the 5G & Beyond Forum.

Making the most of available spectrum to enable these 5G applications has been a particular focus of a number of researchers in the UC San Diego Department of Electrical and Computer Engineering, especially in the area of massive MIMO. Multiple-input, multiple-output (MIMO) technology equips cellular base stations with multiple antennas to enhance capacity and transmit parallel data streams to users on the same frequency. With multiple antennas, MIMO base stations can also perform beamforming, focusing the antenna beam on individual users to enhance connectivity and reduce interference.
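Sending parallel streams to several users on the same frequency can be illustrated with zero-forcing precoding, one standard multi-user MIMO technique (the article does not say which precoder any particular system uses). The sketch assumes a base station with two antennas serving two single-antenna users over a known, noiseless channel, and it ignores transmit-power normalization; the channel values are made up.

```python
def zf_precoder_2x2(h):
    """Zero-forcing precoder W = H^-1 for a 2x2 channel.

    h[u][a] is the complex gain from base-station antenna a to user u.
    """
    (a, b), (c, d) = h
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("channel matrix is singular; users cannot be separated")
    return [[d / det, -b / det], [-c / det, a / det]]


def mat_vec(m, v):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]


# Hypothetical channel and one symbol per user, sent on the same frequency.
H = [[1 + 1j, 0.5], [0.2j, 1 - 0.5j]]
symbols = [1 + 0j, -1 + 0j]

W = zf_precoder_2x2(H)
tx = mat_vec(W, symbols)  # what each antenna transmits
rx = mat_vec(H, tx)       # what each user receives: H W s = s, no cross-talk
print(rx)
```

Each user receives only its own symbol because the precoder cancels the channel's cross-coupling; with noise and a power limit, practical systems trade some of that cancellation for signal strength.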

A big challenge inherent to massive MIMO, however, is that it requires a large number of channels to be estimated in a limited time, for both uplink and downlink communications. In a session on massive MIMO, Electrical Engineering Professor Bhaskar Rao presented approaches for overcoming this challenge in several scenarios: TDD (time-division duplex), FDD (frequency-division duplex) and low-complexity architectures. For TDD, Rao’s approach is semi-blind channel estimation. For FDD, Rao proposes a compressed sensing framework that represents the channel in low dimensions using dictionaries and exploits the sparsity of the channel. For low-complexity architectures, particularly one-bit massive MIMO, Rao’s research group is exploring ‘angle of arrival estimation-based modeling’ for mmWave communications.
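Why a dictionary makes the channel low-dimensional can be shown with a small example. Assuming a uniform linear array and propagation paths whose angles fall exactly on the grid of a DFT dictionary (an idealization; real paths fall between grid points and leak into neighboring bins), a 32-antenna channel with two paths is fully described by two dictionary coefficients. This sketch illustrates only the sparse representation; it is not Rao’s compressed sensing estimator, which would recover those few coefficients from a reduced set of pilot measurements.

```python
import cmath


def dft_atom(k, n_antennas):
    """Array response of an on-grid path in angular bin k (one dictionary atom)."""
    return [cmath.exp(2j * cmath.pi * k * n / n_antennas) for n in range(n_antennas)]


def angular_coefficients(h):
    """Project the channel vector onto every DFT atom (angular-domain view)."""
    n_ant = len(h)
    return [
        sum(h[n] * cmath.exp(-2j * cmath.pi * m * n / n_ant) for n in range(n_ant)) / n_ant
        for m in range(n_ant)
    ]


def sparse_representation(h, k):
    """Keep only the k strongest angular bins: a k-term channel model."""
    coeffs = angular_coefficients(h)
    strongest = sorted(range(len(coeffs)), key=lambda m: abs(coeffs[m]), reverse=True)[:k]
    return {m: coeffs[m] for m in strongest}


def reconstruct(sparse, n_antennas):
    """Rebuild the full channel vector from the retained dictionary coefficients."""
    h = [0j] * n_antennas
    for m, c in sparse.items():
        for n, a in enumerate(dft_atom(m, n_antennas)):
            h[n] += c * a
    return h


# Hypothetical two-path channel on a 32-antenna array.
N = 32
h = [0.9 * x + 0.4j * y for x, y in zip(dft_atom(5, N), dft_atom(20, N))]
model = sparse_representation(h, 2)
print(sorted(model))  # → [5, 20]
error = max(abs(a - b) for a, b in zip(reconstruct(model, N), h))
print(f"max reconstruction error: {error:.2e}")
```

Two complex coefficients stand in for 32 channel entries here; that reduction in unknowns is what a compressed sensing estimator exploits to need fewer pilots.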

Reducing power consumption in massive MIMO systems was the focus of another talk by Professor Dey, which explored energy-efficient base station designs called hybrid beamforming architectures. A feature of these architectures is that they can use fewer RF (radio frequency) chains than antennas, and fewer RF chains mean less power consumption. A challenge remains, however: identifying the optimal number of RF chains needed to minimize power consumption while still satisfying user requirements. Dey and his team developed a machine-learning model to calculate this number, resulting in a method that can save approximately half the power compared with using all the RF chains.
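The optimization Dey’s team tackled can be stated as a small search problem. The sketch below replaces their machine-learning model with a plain exhaustive search over the number of RF chains, under deliberately simple and hypothetical models: each active RF chain carries one data stream, each stream delivers bandwidth × log2(1 + SNR), and every RF chain adds a fixed amount of power. None of these constants come from the talk.

```python
import math

P_FIXED_W = 2.0     # hypothetical fixed base-station overhead (watts)
P_RF_CHAIN_W = 0.5  # hypothetical power drawn by each active RF chain (watts)


def sum_rate_bps(n_rf, n_users, bandwidth_hz, snr_linear):
    """Toy capacity model: one stream per RF chain, at most one stream per user."""
    streams = min(n_rf, n_users)
    return streams * bandwidth_hz * math.log2(1 + snr_linear)


def power_w(n_rf):
    """Toy power model: fixed overhead plus a per-RF-chain cost."""
    return P_FIXED_W + n_rf * P_RF_CHAIN_W


def min_power_rf_chains(n_antennas, n_users, bandwidth_hz, snr_linear, required_bps):
    """Return (n_rf, watts) for the cheapest configuration meeting the demand, or None."""
    feasible = [
        (power_w(n), n)
        for n in range(1, n_antennas + 1)
        if sum_rate_bps(n, n_users, bandwidth_hz, snr_linear) >= required_bps
    ]
    if not feasible:
        return None
    watts, n_rf = min(feasible)
    return n_rf, watts


# 64 antennas, 8 users, 100 MHz, SNR of 15 (about 11.8 dB), 1.5 Gb/s demand.
best = min_power_rf_chains(64, 8, 100e6, 15.0, 1.5e9)
print(best, "vs. all chains:", power_w(64))
```

In this toy model power grows monotonically with the chain count, so the answer is simply the smallest feasible count; in a real hybrid beamforming system the achievable rate depends on channel conditions and the analog beamformer design, so the search is no longer this simple.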

Continuing the Quest for Low-Power, Low-Cost Circuitry

Phase-locked loops (PLLs), which generate very precise local oscillator signals, are also essential for transmitting and receiving wireless signals. Spurious tones are a form of error that plagues PLLs and can limit wireless transceiver performance. Electrical Engineering Professor Ian Galton presented a PLL integrated circuit (IC), enabled by a recent invention from his lab, that achieves record-setting spurious tone performance.

Galton’s research group developed a “drop-in” replacement for the digital delta-sigma modulators used in most PLLs today. Called a successive requantizer and based on new signal processing algorithms developed by the group, it enables better spurious tone performance than is possible with digital delta-sigma modulators. Galton noted that the higher performance of 5G relative to prior generations requires improved PLLs, and spurious tones in particular are expected to be a significant concern for the local oscillator PLLs in 5G transceivers.
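To see why the modulator in a fractional-N PLL can create spurious tones, consider the simplest digital delta-sigma modulator: a first-order accumulator whose carry-out nudges the divider ratio. The sketch below (the frequency word, modulus and integer divide value are hypothetical, and practical PLLs typically use higher-order modulators) shows that the output averages to the desired fraction but repeats with a short period. The successive requantizer itself is not shown.

```python
from fractions import Fraction


def first_order_dsm(frac_word, modulus, n_samples):
    """First-order digital delta-sigma modulator (accumulator with carry-out).

    Each output is 0 or 1; its long-run average equals frac_word / modulus.
    """
    acc, out = 0, []
    for _ in range(n_samples):
        acc += frac_word
        if acc >= modulus:  # overflow: emit a carry and wrap the accumulator
            acc -= modulus
            out.append(1)
        else:
            out.append(0)
    return out


def shortest_period(seq):
    """Smallest p such that seq repeats every p samples (checked over seq)."""
    for p in range(1, len(seq) + 1):
        if all(seq[i] == seq[i % p] for i in range(len(seq))):
            return p
    return len(seq)


N_INT = 40  # hypothetical integer part of the divide ratio
carries = first_order_dsm(3, 8, 64)
divide_ratios = [N_INT + c for c in carries]
print(carries[:8])                                       # → [0, 0, 1, 0, 0, 1, 0, 1]
print(Fraction(sum(divide_ratios), len(divide_ratios)))  # → 323/8, i.e. 40 + 3/8
print(shortest_period(carries))                          # → 8
```

Because the divider pattern repeats every 8 reference cycles, its error energy falls at multiples of one eighth of the reference frequency rather than being spread out, which is how such periodic patterns show up as spurious tones in the PLL output.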

Power amplifiers are critical elements of the hardware mix in 5G systems, since they directly influence overall power dissipation. Electrical Engineering Professor Peter Asbeck’s research is directed at developing 5G power amplifiers with high efficiency, high linearity and wide bandwidth. One achievement is the demonstration of an all-GaN multiband envelope-tracking power amplifier that achieves a record 80 MHz signal bandwidth while covering a wide range of carrier frequencies (0.7–2.2 GHz). This work was done by a team of researchers at CWC, Mitsubishi Electric and Nokia Bell Labs. Other results highlighted in Asbeck’s talk include a CMOS Class G/Voltage-Mode-Doherty amplifier (covering 3–4 GHz) whose efficiency peaks at 18% at 12 dB backoff and which, with a simple memoryless look-up table, achieves 1% error-vector magnitude (suitable for 256-QAM OFDM).
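Error-vector magnitude (EVM) compares each transmitted constellation point with its ideal position. A minimal sketch of the usual RMS definition, normalized to the average reference power, is below; the uniform 1% gain error in the example is a hypothetical impairment chosen so the answer is easy to check, not a model of Asbeck’s amplifier.

```python
import math


def square_qam(order):
    """Ideal square M-QAM constellation with levels ±1, ±3, ... on each axis."""
    m = math.isqrt(order)
    if m * m != order:
        raise ValueError("order must be a perfect square, e.g. 16, 64, 256")
    levels = [2 * i - (m - 1) for i in range(m)]
    return [complex(i, q) for i in levels for q in levels]


def evm_rms_percent(received, reference):
    """RMS EVM in percent, normalized to the mean power of the reference symbols."""
    err_power = sum(abs(r - s) ** 2 for r, s in zip(received, reference))
    ref_power = sum(abs(s) ** 2 for s in reference)
    return 100.0 * math.sqrt(err_power / ref_power)


ideal = square_qam(256)
distorted = [1.01 * s for s in ideal]  # hypothetical 1% amplitude error
print(f"{evm_rms_percent(distorted, ideal):.3f} %")
```

256-QAM carries 8 bits per symbol (log2 256 = 8), so its points sit close together and only a small error vector can be tolerated before symbols are misread, which is why such a low EVM matters.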

Asbeck’s work has also led to wide-bandwidth modulation of a CMOS 28 GHz amplifier with signals of up to 64-QAM and 5 GHz bandwidth, achieving 30 Gb/s while maintaining 17 dBm output power and 15% power-added efficiency, without any digital predistortion. He also demonstrated that on-chip analog predistortion in a CMOS Doherty amplifier achieves good gain flatness up to a saturation power of 25 dBm, with 23.5% power-added efficiency at 6 dB backoff, again without digital predistortion. These results suggest that CMOS may provide enough power for most 5G applications.
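The 30 Gb/s figure is consistent with simple arithmetic, assuming a symbol rate roughly equal to the 5 GHz signal bandwidth (a simplification that ignores pulse-shaping roll-off). The second line converts the 17 dBm output power to milliwatts, and the last line estimates the DC power drawn at 15% power-added efficiency, assuming the input drive power is negligible; neither assumption comes from the article.

```latex
\begin{aligned}
R_b &\approx \log_2(64)\,\tfrac{\text{bit}}{\text{symbol}} \times 5\times10^{9}\,\tfrac{\text{symbol}}{\text{s}}
     = 6 \times 5\times10^{9}\ \text{b/s} = 30\ \text{Gb/s},\\
P_{\text{out}} &= 10^{17/10}\ \text{mW} \approx 50\ \text{mW},\\
P_{\text{DC}} &\approx \frac{P_{\text{out}}}{\text{PAE}} = \frac{50\ \text{mW}}{0.15} \approx 333\ \text{mW}.
\end{aligned}
```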

Another power-saving approach was demonstrated with an ultra-low-power wake-up radio developed by the teams of electrical engineering professors Drew Hall, Patrick Mercier, Young-Han Kim and Gabriel Rebeiz. In conventional radios, most of the power is spent waiting for a signal before performing a function, which shortens the lifetime of battery-powered IoT devices. UC San Diego researchers are exploring replacing conventional radios with a wake-up radio designed to be always on while consuming “near-zero” power. The new wake-up radio uses only 4.5 nW, which is essentially the leakage power of a coin-cell battery, while achieving -69 dBm sensitivity.
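A back-of-the-envelope calculation shows why a nanowatt-level receiver matters for IoT battery life. The sketch assumes a CR2032-class coin cell holding about 225 mAh at 3 V (roughly 2.4 kJ) and compares the 4.5 nW wake-up radio with a hypothetical conventional receiver that listens continuously at 1 mW; the 1 mW figure is illustrative, not a value from the article. It also converts the -69 dBm sensitivity into watts.

```python
SECONDS_PER_DAY = 86_400
SECONDS_PER_YEAR = 365 * SECONDS_PER_DAY


def battery_energy_j(capacity_mah, voltage_v):
    """Stored energy in joules: charge (mAh converted to coulombs) times voltage."""
    return capacity_mah / 1000 * 3600 * voltage_v


def lifetime_s(energy_j, power_w):
    """Idealized runtime if the radio were the only load (ignores self-discharge)."""
    return energy_j / power_w


def dbm_to_w(dbm):
    """Convert a power level in dBm to watts."""
    return 10 ** (dbm / 10) / 1000


energy = battery_energy_j(225, 3.0)      # hypothetical coin cell, about 2430 J
wake_up = lifetime_s(energy, 4.5e-9)     # 4.5 nW wake-up radio (from the article)
conventional = lifetime_s(energy, 1e-3)  # hypothetical 1 mW always-listening receiver

print(f"wake-up radio: {wake_up / SECONDS_PER_YEAR:,.0f} years")
print(f"1 mW receiver: {conventional / SECONDS_PER_DAY:.1f} days")
print(f"-69 dBm = {dbm_to_w(-69):.2e} W")
```

The multi-thousand-year result is not a real battery life, since the cell’s own self-discharge dominates long before then; that is the point of comparing the radio’s consumption to the battery’s leakage.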

Another big challenge in the field of 5G is making affordable phased arrays for 5G applications at both 28 GHz and 60 GHz. These antenna arrays can steer signals in a desired direction by shifting the phase of the signal emitted from each radiating element, so that the signals interfere constructively in that direction and destructively elsewhere. Electrical engineering professor Gabriel Rebeiz’s presentation at the 5G Forum highlighted his group’s recent progress in this area. Using commercial techniques, namely the integration of SiGe with antennas on a multi-layer printed circuit board, Rebeiz and his team have built affordable, high-performance phased arrays that support record-setting data rates (Gbps communication links) without calibration, achieving 50 dBm EIRP at 30 GHz.
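The phase-shifting principle behind a phased array fits in a few lines. The sketch assumes an idealized uniform linear array with half-wavelength spacing and identical, isotropic elements (no mutual coupling or amplitude errors). It computes the phase shift for each element so that all signals add in phase toward the chosen angle, then evaluates the array factor in other directions. The 16-element, 30-degree example is hypothetical and does not model Rebeiz’s SiGe arrays.

```python
import cmath
import math


def steering_phases(n_elements, steer_deg, spacing_wl=0.5):
    """Per-element phase shifts (radians) that point the main beam at steer_deg."""
    step = 2 * math.pi * spacing_wl * math.sin(math.radians(steer_deg))
    return [-step * n for n in range(n_elements)]


def array_factor(phases, look_deg, spacing_wl=0.5):
    """Magnitude of the summed element signals as seen from look_deg."""
    step = 2 * math.pi * spacing_wl * math.sin(math.radians(look_deg))
    return abs(sum(cmath.exp(1j * (step * n + p)) for n, p in enumerate(phases)))


phases = steering_phases(16, 30.0)
for angle in (30.0, 0.0, -30.0):
    print(f"{angle:+5.1f} deg: |AF| = {array_factor(phases, angle):.3f}")
```

The 16 contributions add coherently only at the steering angle, giving an array factor of 16 there, while at 0 and -30 degrees they cancel. Coherent gain of this kind, combined with the output power of many elements, is what raises a phased array’s EIRP.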

Keynote presenter Mark Pierpoint, Vice President and General Manager of Internet Infrastructure Solutions for Keysight Technologies, acknowledged that phased arrays have great potential and included in his own presentation data plots from Rebeiz’s students’ work on beam steering. Pierpoint noted, however, that “there’s a lot more work to do, particularly in knowing how to operate them. We believe that the combination of new research and applications is going to solve these problems. We’re into some really rich times over the next decade, I believe.”
