More Realistic Simulated Cloth for More Realistic Video Games and Movies

Computer scientists develop new model to simulate cloth on a computer with unprecedented accuracy

By:

- Ioana Patringenaru


Computer scientists at the University of California, San Diego, have developed a new computer model that simulates, with unprecedented accuracy, the way cloth and light interact. The new model can be used in animated movies and in video games to make cloth look more realistic.

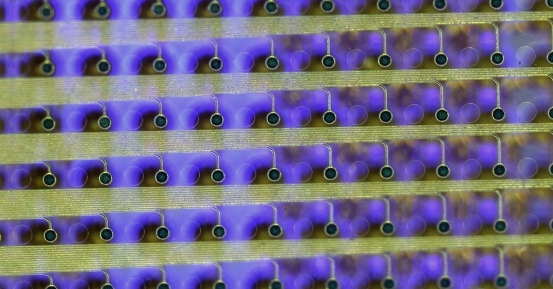

Simulated fabrics lit by a square area light. Image credit: Iman Sadeghi, et al./Jacobs School of Engineering/UC San Diego

Existing models are either too simplistic, producing unrealistic results, or too complex and costly for practical use. Researchers presented their findings at the SIGGRAPH 2013 conference held July 21 to 25 in Anaheim, Calif.

“Not only is our model easy to use, it is also more powerful than existing models,” said Iman Sadeghi, who developed the model while working on his Ph.D. in the Department of Computer Science and Engineering at UC San Diego. He earned his Ph.D. in 2011 and currently works for Google in Los Angeles.

“The model solves the long-standing problem of rendering cloth,” said Sadeghi’s Ph.D. advisor Henrik Wann Jensen, who earned an Academy Award in 2004 for research that brought lifelike skin to animated characters and was later used in many Hollywood blockbusters, including “Lord of the Rings.” “Cloth in movies and games often looks wrong, and this model is the first practical way of controlling the appearance of most types of cloth in a realistic way.”

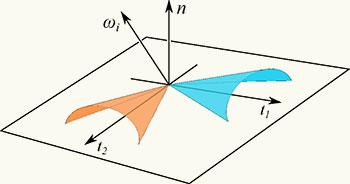

The model treats the fabric as a mesh of microcylinders oriented at 90 degrees from each other.

The model takes a novel approach: it captures the interaction of light with cloth by simulating how each individual thread scatters light, then combines that information according to the fabric’s weaving pattern. “It essentially treats the fabric as a mesh of interwoven microcylinders, which scatter light the same way as hair, but are oriented at 90 degrees from each other,” Sadeghi said.
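For readers who want a feel for the idea, here is a minimal Python sketch of two perpendicular sets of threads, each scattering light like a tiny cylinder, blended according to the weave. It is not the researchers' model. It swaps in a simple Kajiya-Kay-style highlight term, a classic hair-shading formula, for the measured thread scattering, and the names `fiber_highlight`, `cloth_highlight`, `warp_fraction` and `shininess` are hypothetical, chosen only for illustration.

```python
import math


def fiber_highlight(tangent, light, view, shininess=40.0):
    """Kajiya-Kay-style specular term for one cylindrical thread.

    Assumption: all vectors are unit length, and `light` and `view`
    both point away from the cloth surface.
    """
    t_dot_l = sum(a * b for a, b in zip(tangent, light))
    t_dot_v = sum(a * b for a, b in zip(tangent, view))
    sin_l = math.sqrt(max(0.0, 1.0 - t_dot_l ** 2))
    sin_v = math.sqrt(max(0.0, 1.0 - t_dot_v ** 2))
    # Equals 1 when the view direction lies on the mirror cone around the thread.
    cos_term = max(0.0, sin_l * sin_v - t_dot_l * t_dot_v)
    return cos_term ** shininess


def cloth_highlight(light, view, warp_fraction):
    """Blend two thread directions set 90 degrees apart.

    `warp_fraction` is a hypothetical stand-in for the weaving pattern:
    the share of the visible surface covered by warp threads.
    """
    warp = (1.0, 0.0, 0.0)  # threads running along x
    weft = (0.0, 1.0, 0.0)  # threads running along y
    return (warp_fraction * fiber_highlight(warp, light, view)
            + (1.0 - warp_fraction) * fiber_highlight(weft, light, view))


# Light and viewer on the same side, tilted 30 degrees along the warp direction.
tilt = math.radians(30)
direction = (math.sin(tilt), 0.0, math.cos(tilt))
mostly_warp = cloth_highlight(direction, direction, warp_fraction=0.8)
mostly_weft = cloth_highlight(direction, direction, warp_fraction=0.2)
```

In this toy setup only the weft threads catch the highlight, so the weft-dominated surface comes out brighter than the warp-dominated one under identical lighting. That is the kind of weave-dependent difference in appearance the article describes.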

Sadeghi is an expert on the subject of simulating light interacting with hair. While a Ph.D. student in Jensen’s research group, he developed a model that does just that and that was later used in Disney’s “Tangled,” a retelling of the Brothers Grimm fairy tale Rapunzel. The animated movie’s main character sported 70 feet of simulated blond hair.

“In addition to faithfully reproducing the appearance of existing fabrics, our model can act as a framework to visualize what new fabrics would look like. We can simulate any combination of weaving pattern and thread types,” said Oleg Bisker, who co-authored the paper as part of his master’s thesis on measuring and modeling light scattering from threads.

Sadeghi and Bisker presented the work at SIGGRAPH and fielded many questions from researchers in the game and movie industries. “We expect that our model will be used in many production pipelines soon,” Sadeghi added.

Sadeghi and colleagues used the model to simulate, for the first time, the appearance of a very complex fabric: a polyester satin charmeuse. That fabric is particularly tricky to render because of its unusual weaving pattern, which gives it a different appearance depending on the direction and the side from which it is observed. For example, in one direction, the satin charmeuse has three mirror-like highlights on the front side of the fabric and four on the back.

Image of different types of fabrics simulated by using the model Sadeghi and colleagues developed.

To gain a deeper understanding, Sadeghi and colleagues took photographs of fabrics and even measured the scattering properties of single threads. The researchers had their “a-ha!” moment for developing the model while looking at the fabric’s weaving patterns under a microscope. That’s when they realized that these patterns accounted for the way the light scattered on the fabrics, creating distinct highlights and overall appearance.

In their SIGGRAPH paper, the authors also simulated other types of fabric, such as plain linen and a silk crepe de chine. Their goal was to demonstrate the model’s ability to handle different types of thread and an unlimited variety of weaving patterns. The only other models that may be able to produce comparable results require putting fabrics through a micro-CT scan, an expensive and time-consuming procedure.

The other computer scientist working on the paper was Joachim De Deken, a master’s student, who took measurements of fabrics.
