Center for Visual Computing Has Major Presence at Upcoming Computer Vision Conference

By: Doug Ramsey

VisComp faculty with papers at CVPR 2017 (l-r): CSE's Ravi Ramamoorthi, ECE's Nuno Vasconcelos, CSE's Hao Su and Manmohan Chandraker, CogSci's Zhuowen Tu

Computer vision researchers from the University of California San Diego will have a major presence at the International Conference on Computer Vision and Pattern Recognition (CVPR 2017), with 20 papers on the agenda featuring at least one co-author from UC San Diego. Considered the premier forum for computer vision researchers, the conference will take place July 21-26 in Honolulu, Hawaii.

Of those 20 papers, fully 17 feature faculty or students from the university’s Center for Visual Computing (VisComp). Among them, four were accepted to the main research track for full oral presentations (an honor given to only 2.65% of papers submitted). Another four papers were accepted for brief oral ‘spotlight’ presentations, and the remaining papers will be presented as posters.

Besides the five papers from VisComp’s newest member, Hao Su (see July 17 news release), the center also will benefit from the prolific work of CSE professor Manmohan Chandraker, who joined the faculty in 2016. Chandraker is a co-author on six papers in all.

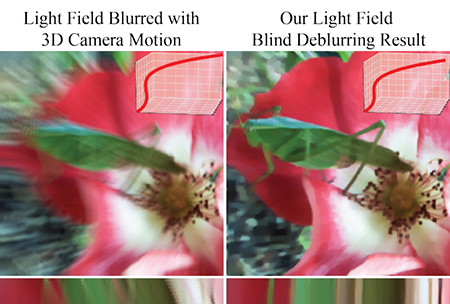

Images from Professor Ramamoorthi's paper showing an improvement in a deblurring technique to recover sharp light field and 3D camera motion path of real and synthetically-blurred light fields.

According to VisComp founding director Ravi Ramamoorthi, the arrival of new faculty is already being felt at CVPR and other vision-related conferences such as ICCV and ECCV. He noted that UC San Diego will have roughly the same number of papers at CVPR 2017 as a leading university whose vision faculty is roughly double the size of UC San Diego’s.

During the conference Ramamoorthi will deliver the keynote talk to the Workshop on Light Fields for Computer Vision. He’ll open the July 26 workshop with a presentation on “Light Fields: From Shape Recovery to Sparse Reconstruction.” Light fields are also the subject of the professor’s primary paper to be presented in the research track of the main conference.

Oral Presentations

Ramamoorthi is the senior author on the paper “Light Field Blind Motion Deblurring.” His co-authors are both from UC Berkeley, where he taught prior to joining the CSE faculty at UC San Diego in 2014. First author Pratul P. Srinivasan is a Ph.D. student co-advised by Ramamoorthi and fellow co-author, UC Berkeley professor Ren Ng. The paper explores deblurring light fields of general 3D scenes captured under 3D camera motion. “We show the theoretical advantages of capturing a 4D light field instead of a conventional 2D image for motion deblurring, and derive simple methods of motion deblurring in certain cases,” noted Ramamoorthi. “We then present an algorithm to blindly deblur light fields of general scenes without any estimation of scene geometry, and demonstrate that we can recover both the sharp light field and the 3D camera motion path of real and synthetically-blurred light fields.”

Newly arrived professor Hao Su is first author on two papers accepted for oral presentation. “A Point Set Generation Network for 3D Object Reconstruction from a Single Image” focuses on deep learning for generating combinatorial data structures, notably geometrical point sets. A second paper, “PointNet: Deep Learning on Point Sets for 3D Classification and Segmentation”, discusses the creation of a neural network that directly consumes an unordered point cloud as input without first converting it to another 3D representation (such as voxel grids).

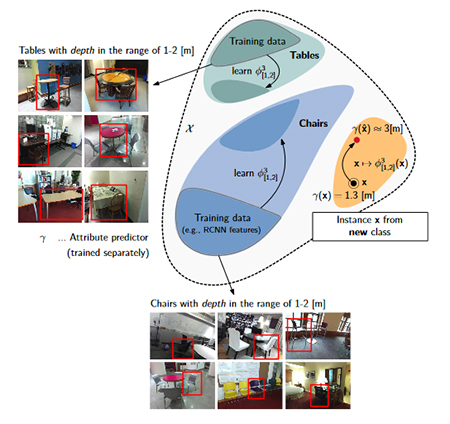

An object recognition technique called ‘attribute-guided augmentation’ can generate artificial samples to extend a corpus of training data. Image from paper by first and senior authors Ph.D. student Mandar Dixit and professor Nuno Vasconcelos, respectively, both from ECE.

Another oral presentation at CVPR comes out of the Statistical Visual Computing Lab in the Electrical and Computer Engineering (ECE) department, led by ECE professor Nuno Vasconcelos, a VisComp member. ECE Ph.D. student Mandar Dixit and Vasconcelos are, respectively, first and senior authors on the paper, “AGA: Attribute-Guided Augmentation”, co-authored with University of Salzburg professor Roland Kwitt and University of North Carolina-Chapel Hill professor Marc Niethammer. As proposed by Vasconcelos and his colleagues, AGA learns a mapping that allows researchers to “synthesize data such that an attribute of a synthesized sample is at a desired value or strength and… is particularly interesting in situations where little data with no attribute annotation is available for learning, but we have access to a large external corpus of heavily annotated samples.” The authors’ experiments showed that AGA of high-level convolutional neural network features “considerably improves one-shot recognition performance” in the two examples of object recognition they used in their experiment.

Spotlight and Poster Presentations

ECE’s Vasconcelos also has a paper selected for a spotlight presentation on Tuesday, “Deep Learning with Low Precision by Half-Wave Gaussian Quantization”. ECE Ph.D. student Zhaowei Cai is first author, and he collaborated with Microsoft researcher Xiaodong He, Jian Sun of Megvii Inc., and senior author Vasconcelos. The paper, first posted on arXiv in February, explores the problem of quantizing the activations of a deep neural network.

CSE Professor Manmohan Chandraker has two spotlight papers for brief oral presentation on Sunday, July 23:

- “DESIRE: Distant Future Prediction in Dynamic Scenes with Interacting Agents”, co-authored by Namhoon Lee, Wongun Choi, Paul Vernaza, Christopher B. Choy, Philip H. S. Torr, and Manmohan Chandraker. Two of the co-authors are former colleagues of Chandraker at NEC Labs, one is from his postdoctoral work at Stanford, and two others are from the University of Oxford.

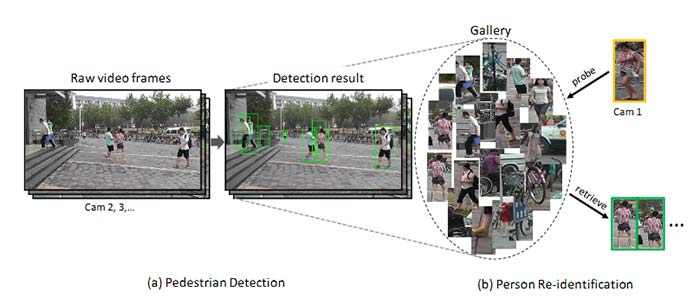

- “Person Re-Identification in the Wild”, co-authored by Liang Zheng, Hengheng Zhang, Shaoyan Sun, Manmohan Chandraker, Yi Yang, Qi Tian Chandraker’s co-authors are based at the University of Texas-San Antonio, University of Technology Sydney, and the University of Science and Technology of China. The paper presents a novel large-scale dataset and comprehensive baselines for end-to-end pedestrian detection and person recognition in raw video frames, including a pipeline for an end-to-end “person re-ID” system.

Pipeline of an end-to-end person re-ID system from Manmohan Chandraker’s paper on “Person Re-Identification in the Wild”.

One more spotlight presentation on the CVPR agenda is co-authored by new CSE professor Hao Su with former Stanford colleagues Li Yi and Leonidas Guibas and the University of Hong Kong’s Xingwen Guo. Their paper, “SyncSpecCNN: Synchronized Spectral CNN for 3D Shape Segmentation”, explores a spectral representation-based neural network. The authors’ SyncSpecCNN network achieved state-of-the-art performance on various semantic annotation tasks, including 3D shape part segmentation and 3D keypoint prediction.

VisComp’s Ramamoorthi and Chandraker have a joint poster with graduate students Zhengqin Li and Zexiang Xu. The topic: “Robust Energy Minimization for BRDF-Invariant Shape from Light Fields.”

Chandraker also has three other posters based on research papers:

- “Deep Supervision With Shape Concepts for Occlusion-Aware 3D Object Parsing”, by Chi Li, M. Zeeshan Zia, Quoc-Huy Tran, Xiang Yu, Gregory D. Hager, and Manmohan Chandraker

- “Deep Network Flow for Multi-Object Tracking”, by Samuel Schulter, Paul Vernaza, Wongun Choi, and Manmohan Chandraker

- “Learning Random-Walk Label Propagation for Weakly-Supervised Semantic Segmentation”, by Paul Vernaza and Manmohan Chandraker

VisComp has one current professor from Cognitive Science, Zhuowen Tu, with two posters on display:

- “Deeply Supervised Salient Object Detection With Short Connections”, by Qibin Hou, Ming-Ming Cheng, Xiaowei Hu, Ali Borji, Zhuowen Tu, and Philip H. S. Torr

- “Aggregated Residual Transformations for Deep Neural Networks”, by Saining Xie, Ross Girshick, Piotr Dollár, Zhuowen Tu, and Kaiming He

ECE Ph.D. student Pedro Morgado has a joint poster with his advisor, Nuno Vasconcelos. In “Semantically Consistent Regularization for Zero-Shot Recognition”, Morgado reports significant improvements over the state of the art on several datasets, achieved with a new convolutional neural network (CNN) framework that uses semantics as constraints for recognition.

CSE recent arrival Hao Su will have two posters based on papers:

- “Learning Shape Abstractions by Assembling Volumetric Primitives,” which explores a learning-based approach for 3D design; and

- “Learning Non-Lambertian Object Intrinsics across ShapeNet Categories,” about inferring optical material properties from a single image of an object.

Other UC San Diego Research at CVPR

Other Jacobs School faculty with papers or keynotes at CVPR 2017 include CSE's Gary Cottrell and ECE's Tara Javidi, Joseph Ford and Mohan Trivedi.

In addition to VisComp’s presence at the conference, other UC San Diego faculty will also be represented in Honolulu. CSE professor Gary Cottrell, his Ph.D. student Yufei Wang, and three researchers from Adobe Research will present their work on “Skeleton Key: Image Captioning by Skeleton-Attribute Decomposition.”

ECE professor Tara Javidi has a spotlight paper on “Fully-Adaptive Feature Sharing in Multi-Task Networks with Applications in Person Attribute Classification”. Javidi’s co-authors include her Ph.D. student Yongxi Lu (who did an internship at IBM in 2016) and four researchers from IBM Research. Likewise, ECE professor Joseph Ford has a computational photography paper on “A Wide-Field-of-View Monocentric Light Field Camera”, jointly with ECE Ph.D. student Glenn Schuster as well as first author Donald G. Dansereau and Gordon Wetzstein, both from Stanford.

Also at CVPR 2017, ECE professor Mohan Trivedi is scheduled to give an invited talk at a July 21 Joint Workshop on Computer Vision in Vehicle Technology and Autonomous Driving Challenge. His topic: “Vision for Autonomous Vehicles that Humans Can Trust.”
