Overcoming Delays in Long-Distance Surgery

By Liezel Labios

An engineering-surgery team at UC San Diego is working to extend the reach of surgeons by allowing them to operate remotely on patients located across a city, country, or even the globe. This feat can be made possible with a technology called telesurgery. Telesurgery is not performed today, but is a promising goal for the future. Researchers are working to ensure that telesurgery can be done safely and efficiently.

The ability to perform telesurgery would open up several new realms of patient care. For example, it would give patients in remote locations greater access to specialized surgeons, make collaborative multi-surgeon and multi-institutional operations possible, and even bring surgical care to the front lines of acute trauma situations.

The major hurdle that stands in the way of realizing telesurgery is the signal delay when transmitting commands from a surgeon’s console to the robot at the patient’s bedside, and the video back to the surgeon.

“We can’t do surgery at long distances right now because there’s a delay when we try to command robots at another physical location. The delay confuses surgeons, it makes it hard to control things,” says Ryan Orosco, MD, Assistant Professor of Surgery in the Division of Head and Neck Surgery Oncology. “The signal delay is unavoidable, so we need creative engineering solutions to combat its effects.”

The delay is similar to turning on the shower and waiting for the water to warm up, explains Michael Yip, PhD, Assistant Professor of Electrical and Computer Engineering. “You have to wait a few seconds before you get the water to reach the right temperature. But imagine having to do this over and over for several hours—that’s what makes telesurgery impossible to do.”

Yip and Orosco teamed up on a project aimed at overcoming this delay. They are working on augmented reality (AR) systems that predict where instruments should go before they are moved and overlay these positions on a screen, so a surgeon can observe in real time where their instruments effectively are, without the effect of delay. The team is also working on visual-haptic feedback that predicts and displays how much force remotely controlled instruments are applying to tissues.

“Instead of relying on delayed visual feedback to see what actually happened, a surgeon can use augmented reality to predict what will happen and then operate in this predictive space. As the instruments move, the robot carries out the actions a bit later on, but the surgeon doesn’t see that effect. So it will feel a lot more comfortable and it will be significantly faster to do these procedures,” says Yip.
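The idea of operating in a "predictive space" can be illustrated with a toy simulation. The sketch below is not the team's system: it assumes a single instrument moving along one axis and a fixed delay measured in time steps, and the function name `simulate` and its parameters are hypothetical. It only shows the difference between what a delayed video feed would display and what an immediate predictive overlay would display.

```python
from collections import deque


def simulate(commands, delay_steps, start=0.0):
    """Toy 1-D model of a predictive overlay under a fixed signal delay.

    Returns two lists of the same length as `commands`:
      overlay      - position shown at once by the predictive overlay
                     (here simply the latest commanded position)
      delayed_view - position visible in the delayed video feed
    All names and the delay model are illustrative assumptions.
    """
    # Positions still "in transit"; pre-filled with the starting pose so
    # the delayed feed shows no motion until the first command arrives.
    in_flight = deque([start] * delay_steps)
    overlay, delayed_view = [], []
    for cmd in commands:
        in_flight.append(cmd)
        overlay.append(cmd)                       # shown without waiting
        delayed_view.append(in_flight.popleft())  # arrives delay_steps late
    return overlay, delayed_view


if __name__ == "__main__":
    overlay, delayed = simulate([1.0, 2.0, 3.0, 4.0, 5.0], delay_steps=2)
    for step, (o, d) in enumerate(zip(overlay, delayed)):
        print(f"step {step}: overlay={o:.1f}  delayed video={d:.1f}")
```

In this sketch the overlay always tracks the operator's latest command, while the delayed view lags by `delay_steps`. A real system would also have to model the robot's kinematics and render the predicted arms onto the video, which this example leaves out.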

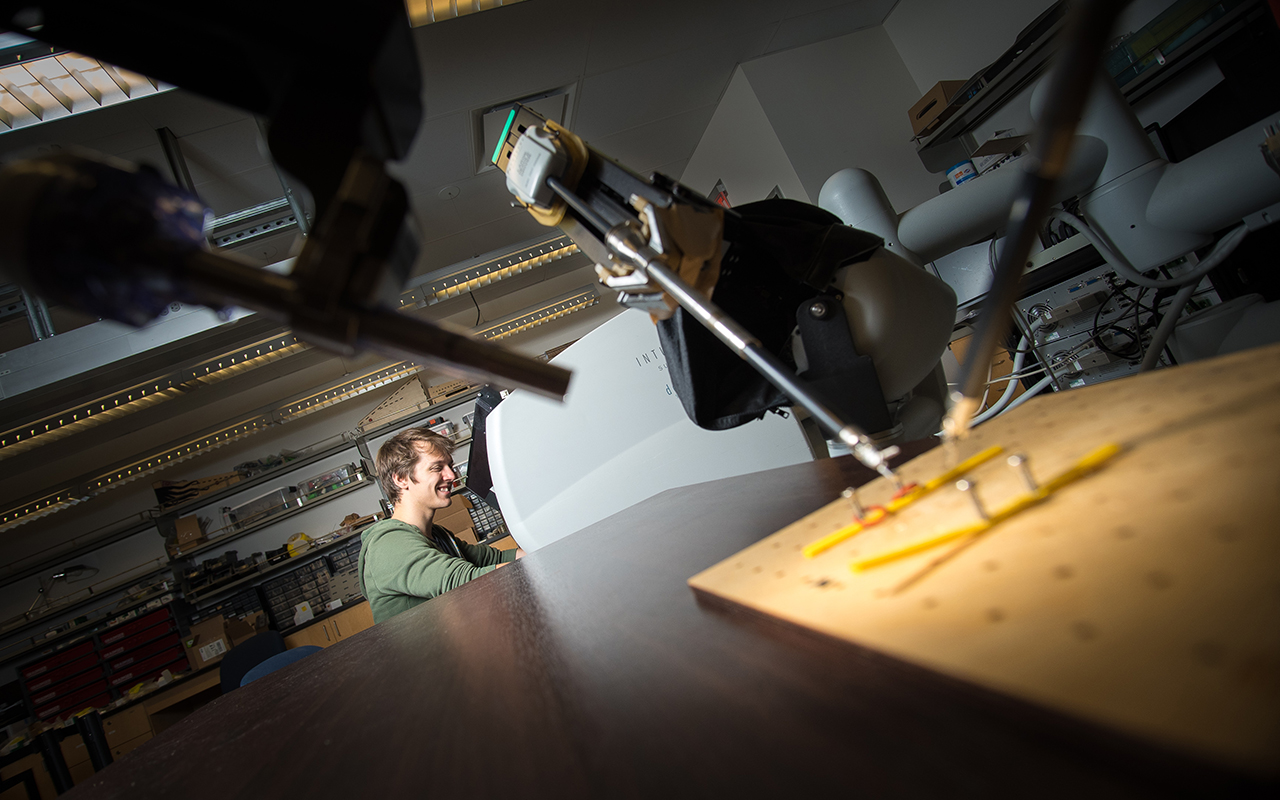

To test the AR system, researchers had 10 participants remotely control a da Vinci surgical robot while experiencing a one-second signal delay. Their task was to use one of the robot arms to pick up a ring from a peg, pass it to the second arm, and place it back down on another peg. When using the AR system, the users cut their time to complete the task by 19 percent.

“The cool thing about the system is that it was able to give the operators immediate feedback, so they were able to move faster and save time,” said Florian Richter, an electrical engineering PhD student in Yip’s lab. “It took participants some practice to get used to seeing the predictive overlay, but people quickly got accustomed to it and ended up naturally following the AR arms.”

The team is working on improving the system’s accuracy and ensuring that it will be safe for clinical use in the future.

Researchers will present this work at the 2019 International Conference on Robotics and Automation (ICRA), May 20-24 in Montreal, Canada.
