Lightening the Data Center Energy Load

UC San Diego engineers earn ARPA-E grant to enable efficiency through photonics

By: Katherine Connor

Electrical engineers and computer scientists at the University of California San Diego are on the front lines of global efforts to reduce the energy used by data centers. The potential impact is great: the U.S. government estimates that data centers currently consume more than 2.5% of U.S. electricity. That share is projected to double in about eight years because of the expected growth in data traffic.

The UC San Diego Jacobs School of Engineering team has been awarded a total of $7.5 million from the U.S. Advanced Research Projects Agency-Energy (ARPA-E) and the California Energy Commission to advance nationwide efforts to double data center energy efficiency in the next decade through deployment of new photonic (light-based) network topologies.

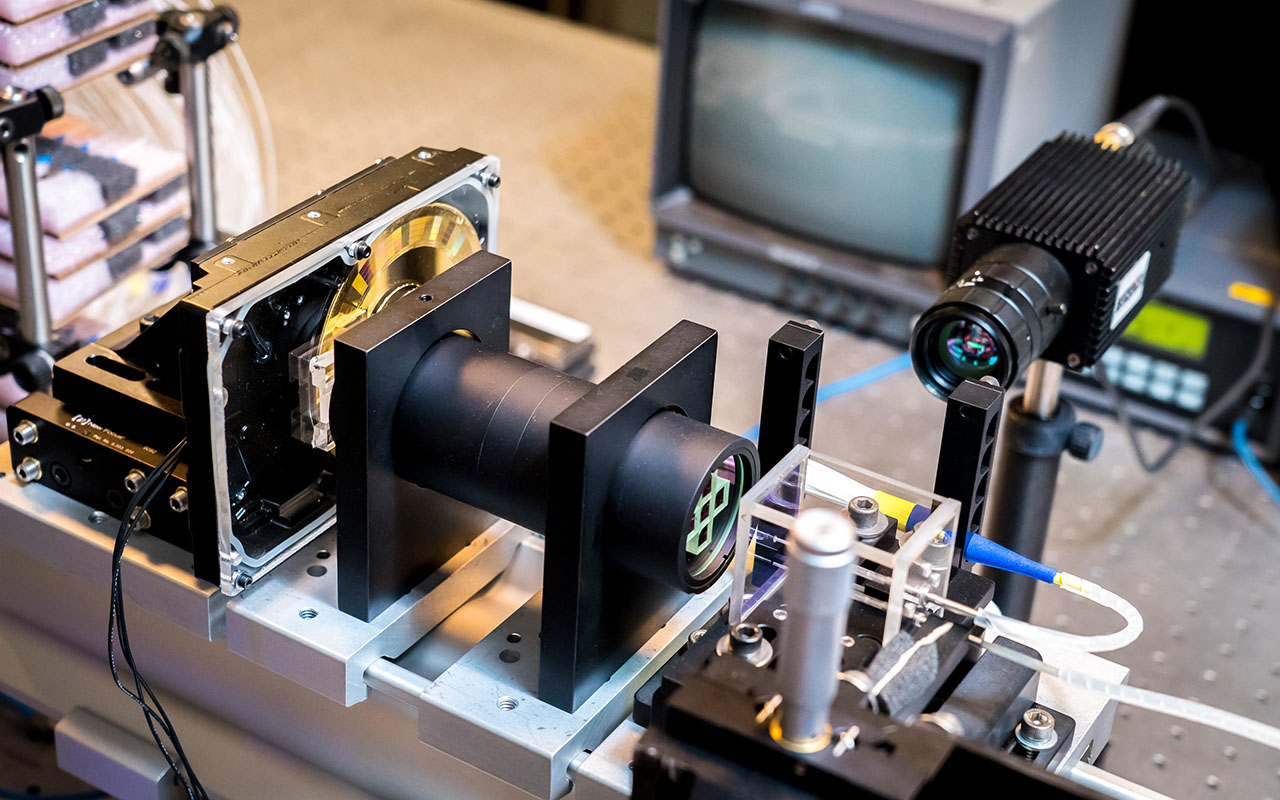

In particular, the UC San Diego team is focused on developing solutions to enable the thousands of computer servers within a data center to communicate with each other over advanced light and laser-based networks that replace existing electrical switches with optical switches developed within the ARPA-E program.

“The photonic devices we’re developing aren’t actually used within the servers per se: instead, the devices connect the servers within the datacenter network using a more efficient optical network,” said George Papen, a professor of electrical and computer engineering at UC San Diego and co-principal investigator on the project.

“By removing bottlenecks in the network, the computer servers, which account for the majority of power in the data center, operate more efficiently. Our project, supported by ARPA-E, aims to double the server power efficiency by transforming the network into a high-speed interconnect free of these bottlenecks,” said George Porter, a professor of computer science at UC San Diego and co-principal investigator.

What’s an optical switch?

So how do these data center networks pass bits of information and computation commands around today? They use a technology called electrical packet switching, in which a message is broken down into smaller groups, or packets, of data. These packets are converted to electrical signals and sent through a cable to a network switch, where they’re routed to the desired location and pieced back together into the original message. Network switches are physical, electrical devices with ports for wired connections that direct the flow of data from many machines.
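The break-apart and piece-together steps described above can be sketched in a few lines of Python. This is only an illustration of the general idea of packet switching, not code from the project: the function names `packetize` and `reassemble`, the sequence-number scheme, and the payload size are all assumptions made for the example.

```python
# Illustrative sketch only: hypothetical helper names, not the project's software.

def packetize(message: bytes, payload_size: int) -> list:
    """Split a message into (sequence_number, payload) packets."""
    if payload_size <= 0:
        raise ValueError("payload_size must be positive")
    return [
        (seq, message[offset:offset + payload_size])
        for seq, offset in enumerate(range(0, len(message), payload_size))
    ]


def reassemble(packets: list) -> bytes:
    """Rebuild the original message, even if packets arrive out of order."""
    ordered = sorted(packets, key=lambda packet: packet[0])
    return b"".join(payload for _, payload in ordered)


message = b"hello data center"
packets = packetize(message, payload_size=4)

# Packets may take different paths and arrive in a different order;
# the sequence numbers let the receiver restore the original message.
received = list(reversed(packets))
restored = reassemble(received)
```

Here the reversed list stands in for packets that arrive out of order after passing through the network.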

Unlike electronic switches, optical switches aren’t bound by the limitations of electronics to transmit data. Instead, optical switches make direct “light path” connections from input ports to output ports. Because data does not have to be converted between optical and electrical form at each switch, optical switches avoid the latency and electronic logjam issues of existing network switches, and they require less power to route data.

Using an optical network instead of an electrical network can produce a more efficient network with a larger data rate to each server. This can increase the energy efficiency of the servers, which consume most of the energy in a data center. One goal of the project is to demonstrate that the cost of such an optical network can drop below the cost of adding the additional semiconductor chips required to get the same data rate on existing electrical networks.

“It would be lower cost in part because you’re using less energy, but also in part because if you wanted to build a very high speed network using existing commercial technology, the cost of adding additional chips to build bigger switches increases dramatically,” said Porter. “It’s not a linear relationship of double-the-speed for double-the-money; you can think of it almost like double the speed for quadruple the cost. On the other hand, optics, at these very high speeds, follows a more linear cost relationship.”
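Porter’s rule of thumb can be turned into a quick back-of-the-envelope comparison. The sketch below assumes a quadratic cost curve for electrical switching (double the speed, quadruple the cost) and a linear one for optics, as in the quote. The equal starting cost of 1.0 and the function names are assumptions for illustration; real costs would differ, and optics may well start out more expensive.

```python
# Back-of-the-envelope sketch of the quoted rule of thumb, not real pricing data.

def electrical_cost(speedup: float, base_cost: float = 1.0) -> float:
    """Assumed quadratic scaling: 2x the speed costs about 4x as much."""
    return base_cost * speedup ** 2


def optical_cost(speedup: float, base_cost: float = 1.0) -> float:
    """Assumed linear scaling: 2x the speed costs about 2x as much."""
    return base_cost * speedup


for speedup in (1, 2, 4, 8):
    print(
        f"{speedup}x speed: electrical ~{electrical_cost(speedup):.0f}x cost, "
        f"optical ~{optical_cost(speedup):.0f}x cost"
    )
```

Under these assumptions the gap widens with every doubling of speed, which is the intuition behind the claim that optics becomes more attractive at very high data rates.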

Developing a proof-of-concept

In Phase 1 of the Lightwave Energy Efficient Datacenters (LEED) project in the ARPA-E Enlitened program, which ran from 2017 to 2019, Papen, Porter and two UC San Diego colleagues developed the photonic technology and network architecture required to enable this scale of optical switching. Those colleagues were Joe Ford, a professor in the Department of Electrical and Computer Engineering, and Alex Snoeren, a professor in the Department of Computer Science and Engineering. The collaboration between electrical engineers, who designed a new type of optical switch, and computer scientists, who developed the protocol to allow it to work at a data center scale, was key.

Their success hinged on a new type of optical switch conceptualized by UC San Diego alumnus Max Mellette, co-founder and CEO of spinout company inFocus Networks. Instead of the full crossbar architecture that was previously used, which allows any node in the data center to talk to any other node, his idea was to create a switch that had more limited connectivity, thereby enabling faster speeds.

The key insight was to develop a network protocol that would enable this faster but less-connected architecture to communicate in a way that would still deliver the performance required. By working closely with computer scientists led by Porter and Snoeren, the team made it happen.
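To see how a less-connected switch could still let every node reach every other node, consider the toy model below. It is not the team’s switch or protocol, which the article does not describe in detail. It assumes, purely for illustration, a switch that can only cycle through a small fixed set of port-to-port connection patterns, one per time slot, so that each pair of ports is directly connected at some point in the cycle. The function names and the rotation pattern are hypothetical.

```python
# Toy model, NOT the team's design: a switch limited to a fixed, repeating
# set of connection patterns instead of arbitrary any-to-any connections.

def rotating_matchings(num_ports: int) -> list:
    """Return num_ports - 1 patterns; pattern k links port i to port (i + k) % num_ports."""
    return [
        [(port + shift) % num_ports for port in range(num_ports)]
        for shift in range(1, num_ports)
    ]


def first_slot_connecting(num_ports: int, src: int, dst: int) -> int:
    """Return the first time slot in the cycle where src is linked to dst."""
    for slot, matching in enumerate(rotating_matchings(num_ports)):
        if matching[src] == dst:
            return slot
    raise ValueError("src and dst must be different ports")


schedule = rotating_matchings(4)
# With 4 ports there are only 3 patterns, yet every distinct pair of ports
# is linked in exactly one of them.
slot = first_slot_connecting(4, src=0, dst=3)
```

In a model like this, the switch hardware stays simple because it never has to set up arbitrary connections on demand, and the burden shifts to the protocol, which must decide when to send data so that it lines up with the schedule.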

By the end of Phase 1, this new optical switch was functional, able to run applications and receive data in a testbed setting. Now in Phase 2, the team is working with collaborators at Sandia National Laboratories on scaling up the architecture to function with larger amounts of data and more nodes. The goal for Phase 2 is a realistic testbed demonstration that an optical network architecture provides significant value to end-users.

A storied history of photonics

There was a good reason this UC San Diego team was selected for the Enlitened program: it was here, more than a decade ago, that a research team including Papen and then-postdoc Porter assembled and demonstrated the first data center testbed using an optically switched network. The paper describing this work has been cited more than 1,000 times.

Since then, Porter, Papen, Ford, Snoeren and colleagues in both the electrical engineering and computer science departments and the Center for Networked Systems have worked closely to further develop and refine the technology, and work towards making it a commercially viable reality.

“We were the first to show we could build testbeds with optically switched networks for data centers,” Porter said. “Papen and I have been meeting multiple times a week for 10 years, supervising students together, and working on this optical data center concept for a decade; it’s a real example of what can happen when computer scientists and electrical engineers work closely together.”

While their optical data center is still in the proof-of-concept phase, researchers agree there will come a time when adding more and more semiconductor chips to drive faster speeds simply won’t be cost competitive, even putting aside the energy concerns. At that point, optical systems will become much more appealing. It’s hard to know exactly when that will be, but researchers predict it could be as soon as five years from now, and likely within 10.

“Nonetheless, there's so much work to do to be able to validate that indeed if we can’t continually scale chips, what applications should we first apply optical switching? That will take a significant effort to sort out,” Papen said. The researchers are working with national labs and private companies to test optical switches on various live applications to help answer this question.

Difficult, future-looking work such as this optical data center project is a perfect example of the role academic research institutions play in the innovation ecosystem.

“This is a really hard problem to solve, and hard problems take a long time,” Papen said. “Being able to devote the time to this, and collaborate with faculty and students from across the entire engineering school, is what makes this type of transformative development possible.”
